How Product Fruits Increased LLM Visibility by 222% with MarketCurve

Product Fruits went from 5.7% to 18.5% visibility across ChatGPT, Perplexity, and Claude in just 3 months. See the exact AEO strategy, content framework, and weekly progress that delivered 3x more AI citations.

Introduction to Product Fruits

Product Fruits is a digital product adoption platform that helps SaaS companies onboard users, drive feature adoption, and reduce churn through in-app guidance, tooltips, and AI-powered user assistance. In an era where AI-powered search (ChatGPT, Perplexity, Gemini) is rapidly becoming the primary way users discover software solutions, Product Fruits recognized the need to optimize for Answer Engine Optimization (AEO)--not just traditional SEO.

This case study documents how we took Product Fruits from a 5.7% baseline visibility across major LLMs to 18.5%, a 222% increase, while climbing from 8th position to #1 in competitive citations for its niche.

Phase 1: Establishing the Baseline

Evaluating AEO Tools

Before executing any strategy, we needed to understand Product Fruits' current AI visibility. We evaluated five AEO monitoring platforms:

| Tool | Strengths | Limitations |
|---|---|---|
| Otterly | Good visibility tracking | Limited actionable insights |
| Profound | Competitor analysis | No optimization recommendations |
| Peek AI | Query monitoring | Expensive for features offered |
| Omnia | Granular citation data | No clear next steps |
| Promptwatch | Full visibility + recommendations | -- |

All platforms offered similar core functionality: tracking which queries we rank for, monitoring competitor visibility, identifying which blog posts and pages LLMs cite as sources, and generating relevant queries based on our product category.

Why We Chose Promptwatch

Promptwatch stood out for three reasons:

- Data Transparency: The founder explained exactly how their system works: dedicated bots for ChatGPT, Perplexity, and other LLMs that actively query these platforms and track which sources get cited. This transparency gave us confidence in the data's reliability.

- Actionable Recommendations: Beyond raw visibility data, Promptwatch provided specific guidance on which pages to optimize and how to improve them. This was the missing piece in other tools.

- Cost Efficiency: At $89/month, it was significantly more affordable than competitors while offering more actionable value.

Phase 2: Defining Success Metrics

Query Selection Strategy

After loading Product Fruits into Promptwatch, we faced an overwhelming amount of data. Rather than boiling the ocean, we applied a focused approach:

Step 1: Intent-Based Filtering (80/20 Split)

We categorized queries by intent:

- 80% Commercial Queries (bottom-of-funnel): Users actively searching for solutions
  - "What is the best AI platform for boosting product adoption?"
  - "Best AI user onboarding tool"
  - "Top digital adoption platforms 2024"

- 20% Informational Queries (top-of-funnel): Users researching best practices
  - "How do I increase product adoption rates?"
  - "Best practices for AI agents in product adoption"

The commercial queries represented higher-value traffic--people ready to buy.

Step 2: Traffic Volume Filtering

Using Promptwatch's traffic estimates, we prioritized queries with meaningful search volume. One commercial query we targeted had approximately 10,000 monthly searches--a clear priority.

Step 3: Final Selection

Starting from 20 candidate queries, we refined down to 10 high-impact queries that balanced commercial intent with traffic volume.
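The three filtering steps can be sketched in a few lines of Python. This is a minimal illustration, not a Promptwatch feature or our actual tooling: the `Query` record, its `intent` labels, and the `monthly_volume` field are assumptions standing in for whatever a monitoring tool exports, and the selection simply keeps the highest-volume queries within each intent bucket.

```python
from dataclasses import dataclass


@dataclass
class Query:
    text: str
    intent: str          # "commercial" (bottom-of-funnel) or "informational" (top-of-funnel)
    monthly_volume: int  # traffic estimate exported from an AEO monitoring tool (assumed field)


def select_queries(candidates, total=10, commercial_share=0.8):
    """Keep the highest-volume queries in each intent bucket, honoring the 80/20 split."""
    n_commercial = round(total * commercial_share)
    n_informational = total - n_commercial

    def top(intent, n):
        bucket = [q for q in candidates if q.intent == intent]
        return sorted(bucket, key=lambda q: q.monthly_volume, reverse=True)[:n]

    return top("commercial", n_commercial) + top("informational", n_informational)
```

With 20 candidates and the default arguments, this returns 8 commercial and 2 informational queries, provided each bucket has enough candidates; a bucket that runs short simply contributes fewer.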

Setting the Target

We established tracking in Notion with a simple dashboard:

- 10 priority queries

- Baseline visibility per query

- Weekly progress tracking

Baseline visibility across all 10 queries: 5.7%

This meant Product Fruits appeared in AI responses for these queries only 5.7% of the time across ChatGPT, Gemini, Claude, Perplexity, and AI Mode.
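As a rough sketch of what a visibility figure like this measures, assume it is the share of captured AI responses (one per query, engine, and sampling run) that mention the brand. Promptwatch's exact formula is not described here, so the `Response` record, the case-insensitive substring match, and the helper names below are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass
class Response:
    query: str
    engine: str  # e.g. "ChatGPT", "Gemini", "Claude", "Perplexity", "AI Mode"
    text: str    # the answer as captured by the monitoring tool


def visibility(responses, brand):
    """Percentage of captured responses that mention `brand` (case-insensitive substring match)."""
    if not responses:
        return 0.0
    hits = sum(brand.lower() in r.text.lower() for r in responses)
    return 100.0 * hits / len(responses)


def visibility_by_engine(responses, brand):
    """Same metric, broken down per AI engine."""
    engines = sorted({r.engine for r in responses})
    return {e: visibility([r for r in responses if r.engine == e], brand) for e in engines}
```

A per-engine breakdown helps show whether a change in the overall number comes from one platform or from all of them.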

Target: 16% visibility in 3 months (a gain of roughly 10 percentage points, or about a 181% relative improvement over the 5.7% baseline)

Phase 3: Content Execution

The Weekly Cadence

We adopted a consistent publishing rhythm: 3-4 articles per week, targeting 2 priority queries as "pillars" with supporting content pieces.

Content Creation Workflow

Our content production leveraged multiple AI tools in a custom workflow:

- ChatGPT: Initial drafts and ideation

- n8n: Agent workflows for research and outline generation

- Aidbase: Knowledge base integration

- Notion: Content pipeline management

- Claude: Long-form content refinement

The specific tooling mattered less than the process:

- Competitor Gap Analysis: Before writing, we identified topics competitors weren't covering. Rehashing existing content wouldn't move the needle--we needed unique angles and original insights.

- AI-Assisted Drafting: Generated initial content using our agent workflows.

- Human-in-the-Loop Editing: Every piece went through human review to ensure accuracy, add genuine expertise, and maintain brand voice.

- Optimization: Added internal/external links, images, and proper formatting.

- Publication: The client published each piece on its blog, and we monitored results weekly.
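One way to picture this process is as an ordered set of statuses, similar to one Notion board column per step, where nothing advances past review without human sign-off. The `Stage` names and the `advance` helper below are hypothetical; they sketch the gating idea rather than our actual n8n or Notion setup.

```python
from enum import Enum


class Stage(Enum):
    # Ordered statuses, one per step of the workflow (hypothetical names).
    GAP_ANALYSIS = 1
    AI_DRAFT = 2
    HUMAN_REVIEW = 3
    OPTIMIZATION = 4
    PUBLISHED = 5


def advance(stage: Stage, human_approved: bool = False) -> Stage:
    """Move a piece to the next stage; review cannot be passed without approval."""
    if stage is Stage.PUBLISHED:
        raise ValueError("piece is already published")
    if stage is Stage.HUMAN_REVIEW and not human_approved:
        raise ValueError("an editor must approve the draft before optimization")
    return Stage(stage.value + 1)


# Walk one article through the pipeline.
stage = Stage.GAP_ANALYSIS
stage = advance(stage)                       # AI_DRAFT
stage = advance(stage)                       # HUMAN_REVIEW
stage = advance(stage, human_approved=True)  # OPTIMIZATION
stage = advance(stage)                       # PUBLISHED
```

Making approval an explicit argument keeps the human-in-the-loop step from being skipped by accident.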

Query Expansion Strategy

For each parent query, we created multiple content pieces to maximize coverage. For example, from a single keyword like "product adoption," we generated:

- "What are the best product adoption tools to use?"

- "What are the best product adoption metrics to track?"

- "How do you compare which product adoption tool is best for your business?"

- "Chameleon vs Appcues for product adoption tools"

This cluster approach ensured we dominated the topic from multiple angles.
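A deterministic version of this expansion can be sketched with string templates. In practice we generated these variations with AI tools, so treat the `TEMPLATES` list and the `expand_cluster` function below as a hypothetical illustration that reproduces the four example queries above.

```python
from itertools import combinations

# Hypothetical question templates mirroring the example cluster above.
TEMPLATES = [
    "What are the best {kw} tools to use?",
    "What are the best {kw} metrics to track?",
    "How do you compare which {kw} tool is best for your business?",
]


def expand_cluster(keyword, competitors):
    """Build a query cluster: one query per template plus one per competitor pair."""
    queries = [template.format(kw=keyword) for template in TEMPLATES]
    for first, second in combinations(competitors, 2):
        queries.append(f"{first} vs {second} for {keyword} tools")
    return queries


for query in expand_cluster("product adoption", ["Chameleon", "Appcues"]):
    print(query)
```

Because `combinations` yields every unordered pair, adding a third competitor would add two more comparison queries to the cluster.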

Structuring Content for LLMs

We discovered that how content is structured matters as much as what it says. Our formatting guidelines:

- Answer first: Put the main answer in the first paragraph

- Clear hierarchy: Use H1, H2, H3 tags strategically

- Scannable format: Bullet points, numbered lists, tables

- Query repetition: Include the target query in headings and throughout the content

- FAQ sections: Add FAQs at the end--and use FAQ questions as subheadings within the article

- Structured data: Format content so LLMs can easily extract and cite it
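If "structured data" is taken literally, one widely used option is schema.org `FAQPage` markup embedded as JSON-LD. The case study does not say whether Product Fruits used schema markup, and there is no guarantee that LLMs read it, so treat this as an optional sketch: `faq_jsonld` is a hypothetical helper that turns question/answer pairs into a `<script type="application/ld+json">` tag.

```python
import json


def faq_jsonld(faqs):
    """Render (question, answer) pairs as a schema.org FAQPage JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return '<script type="application/ld+json">' + json.dumps(data, indent=2) + "</script>"


# Placeholder answer text; in practice it would come from the article's FAQ section.
print(faq_jsonld([("How do I increase product adoption rates?", "Answer text from the article's FAQ section.")]))
```

Generating the markup from the same FAQ pairs that appear on the page keeps the visible text and the structured data in sync.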

Phase 4: Results by Week

Week 1

- Articles Published: 3

- Visibility: 5.7% → 6.23%

- Change: +0.53 percentage points

Modest gains. The content hadn't yet been indexed and cited by LLMs.

Week 2

- Articles Published: 3 (6 total)

- Visibility: 6.23% → 10.5%

- Change: +4.27 percentage points

Significant jump. LLMs began picking up our new content.

Week 3

- Articles Published: 3 (9 total)

- Visibility: 10.5% → 11.7% (peaked at 15%)

- Citation Rank: 3rd position (behind Userguiding #1, Userpilot #2)

- Change: +1.2 percentage points

Interesting insight: Reddit was in 4th position for citations. This signaled an opportunity.

Week 4

- Articles Published: 4 (13 total)

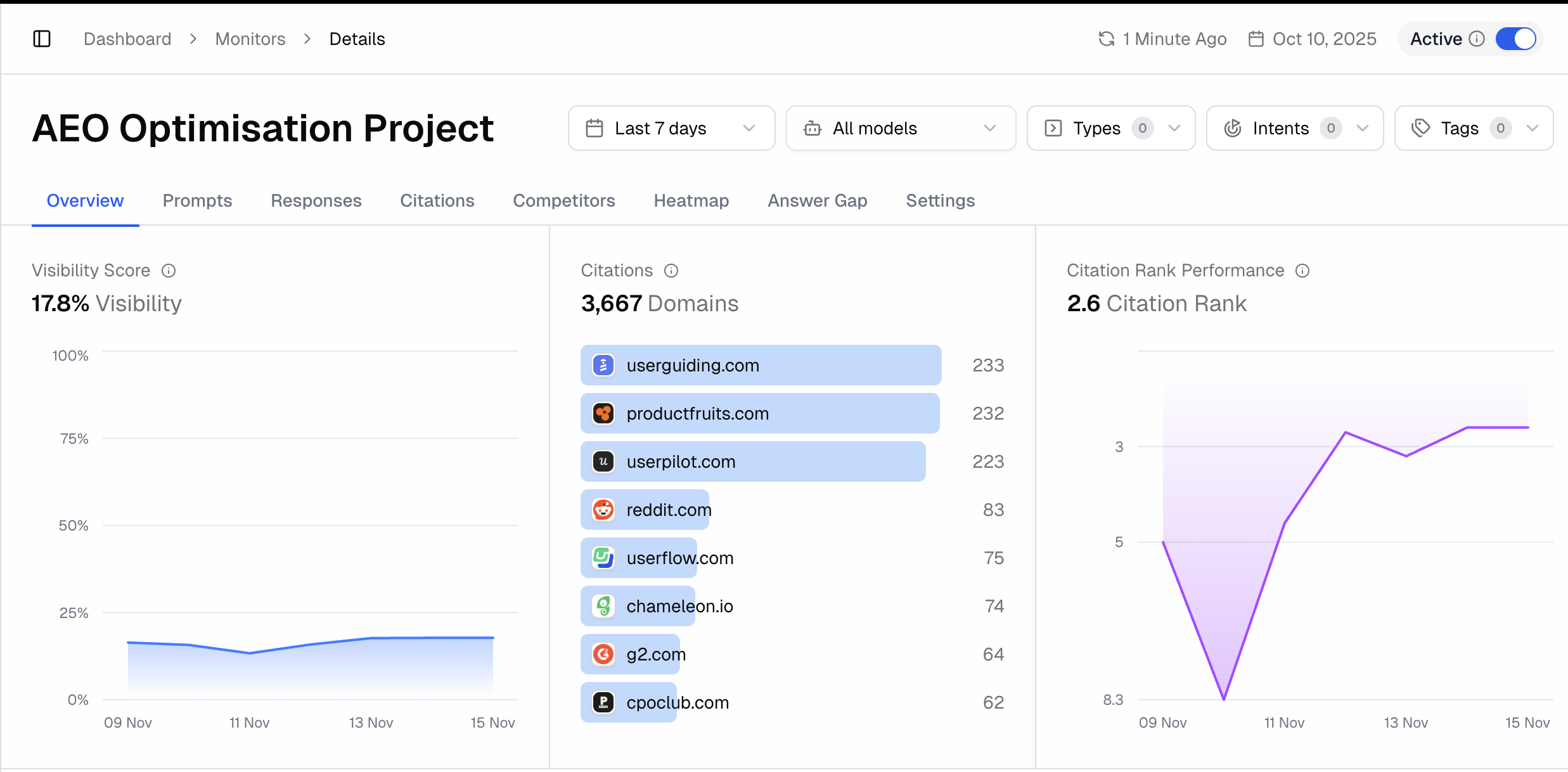

- Visibility: 11.7% → 17.8%

- Citation Rank: 2nd position (leapfrogged Userpilot)

- Average Position: 2.6 (up from 12.4 at baseline)

- Change: +6.1 percentage points

We exceeded our 3-month target in just 4 weeks.
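Average position can be read several ways; a simple assumption is the mean 1-based rank at which the brand appears in responses that mention it at all. The sketch below (a hypothetical `average_position`, with each response reduced to its ordered list of brand mentions) shows that reading. A lower number is better, which is why a drop from 12.4 to 2.6 counts as an improvement.

```python
def average_position(ranked_mentions, brand):
    """Mean 1-based rank of `brand` across responses that mention it; None if never mentioned."""
    positions = [mentions.index(brand) + 1 for mentions in ranked_mentions if brand in mentions]
    if not positions:
        return None
    return sum(positions) / len(positions)


# Each inner list is one AI response, reduced to the brands it names, in order (made-up data).
responses = [
    ["UserGuiding", "Product Fruits", "Userpilot"],
    ["Product Fruits", "Appcues"],
    ["Userpilot", "Chameleon"],  # no mention: excluded from the average
]
print(average_position(responses, "Product Fruits"))  # 1.5
```

Note that this reading ignores responses without a mention, so it complements visibility rather than replacing it.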

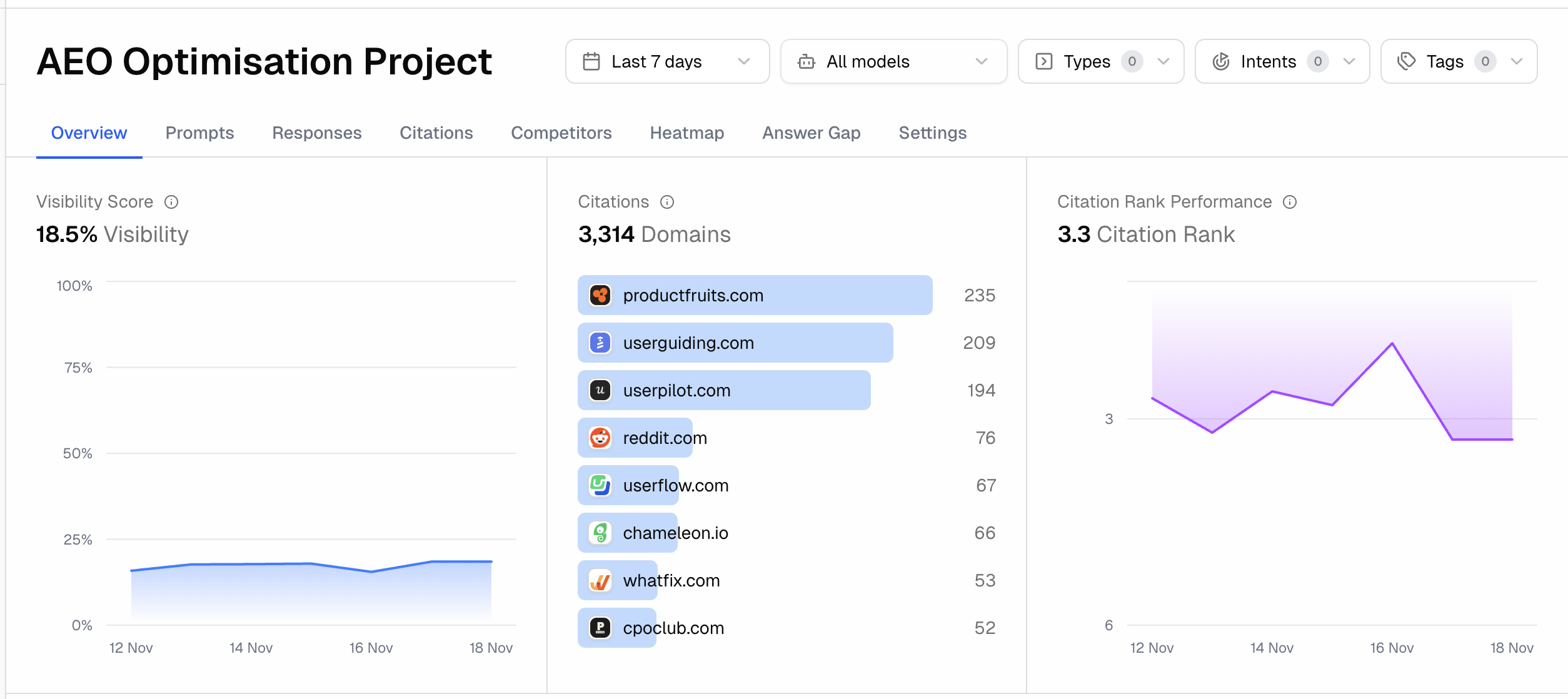

Week 5+

- Articles Published: 4 (17 total)

- Visibility: 17.8% → 18.5%

- Citation Rank: #1 most cited domain in niche (last 7 days)

- Average Citation Rank: 3.3

After peaking at 18.5%, visibility stabilized around the 16% mark.
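The weekly figures mix two kinds of change: percentage points (the "Change" lines) and relative growth (the headline percentages). A small sketch, using only the visibility readings reported in the weekly updates, recomputes the week-over-week percentage-point deltas and shows how a relative change differs; `weekly_changes` and `relative_change` are illustrative helpers, not part of any tool we used.

```python
# Visibility readings (%) from the weekly updates: baseline, Week 1, 2, 3, 4, 5+.
readings = [5.7, 6.23, 10.5, 11.7, 17.8, 18.5]


def weekly_changes(series):
    """Percentage-point change between consecutive readings, rounded to 2 decimals."""
    return [round(b - a, 2) for a, b in zip(series, series[1:])]


def relative_change(before, after):
    """Change expressed as a percentage of the starting value."""
    return 100.0 * (after - before) / before


deltas = weekly_changes(readings)
print(deltas)                                 # [0.53, 4.27, 1.2, 6.1, 0.7]
best_week = deltas.index(max(deltas)) + 1
print(f"Largest gain: Week {best_week}")      # Largest gain: Week 4
print(f"{relative_change(11.7, 17.8):.1f}%")  # 52.1%
```

Week 4's +6.1 points works out to about 52% relative growth on an 11.7% base, which is why the two measures should not be used interchangeably.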

Phase 5: The Reddit Amplification Strategy

Content alone didn't drive all our gains. We implemented a parallel Reddit strategy after noticing Reddit was the 4th most-cited source for our queries.

1. Identifying Relevant Threads

Promptwatch showed which Reddit threads LLMs were citing in their responses. We built an n8n agent that:

- Scraped all relevant Reddit posts for our target queries

- Exported them to a spreadsheet for review
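The n8n agent itself is not reproduced here. As a hedged Python stand-in, the sketch below pulls results from Reddit's public `search.json` listing endpoint and flattens them into spreadsheet-ready CSV rows. The endpoint's availability, rate limits, and terms of use are assumptions to verify against Reddit's API policies before running it, and `fetch_listing`, `listing_to_rows`, and `rows_to_csv` are hypothetical names.

```python
import csv
import io
import json
import urllib.parse
import urllib.request

FIELDS = ["query", "subreddit", "title", "url", "num_comments"]


def fetch_listing(query, limit=25):
    """Fetch a search listing from Reddit's public JSON endpoint (assumed available; check API terms)."""
    url = "https://www.reddit.com/search.json?" + urllib.parse.urlencode({"q": query, "limit": limit})
    request = urllib.request.Request(url, headers={"User-Agent": "aeo-thread-audit/0.1"})
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)


def listing_to_rows(listing, query):
    """Flatten a Reddit listing into one dict per post."""
    rows = []
    for child in listing.get("data", {}).get("children", []):
        post = child.get("data", {})
        rows.append({
            "query": query,
            "subreddit": post.get("subreddit", ""),
            "title": post.get("title", ""),
            "url": "https://www.reddit.com" + post.get("permalink", ""),
            "num_comments": post.get("num_comments", 0),
        })
    return rows


def rows_to_csv(rows):
    """Serialize rows to CSV text that any spreadsheet can import."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```

A typical run would call `fetch_listing` once per target query, concatenate the rows, and review the resulting CSV by hand before commenting anywhere.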

2. Strategic Commenting

We added genuine, helpful comments mentioning Product Fruits on relevant threads. Due to LLMs' recency bias--their tendency to favor newer content--these fresh comments got picked up quickly in AI responses.

3. Native Reddit Content

We repurposed our blog content into Reddit-native posts. Example:

"I analyzed 10+ product adoption tools that increase adoption rates. Here's what I found..."

These posts provided standalone value while naturally mentioning Product Fruits. LLMs cited these Reddit posts alongside our blog content, amplifying our visibility.

The Reddit Effect

After doubling down on Reddit in Week 4, Product Fruits leapfrogged Userpilot to claim the #2 citation position. The combination of blog content + Reddit presence created a compounding effect that accelerated our climb.

The Journey: Visual Timeline

Starting Point (October 17)

- Visibility: 5%

- Citation Rank: 12.4 (near bottom)

- Position: 8th among competitors

Week 3 Checkpoint (Early November)

- Visibility: 11%

- Citation Rank: 3rd position

- Competitors ahead: Userguiding (#1), Userpilot (#2)

- Notable: Reddit at #4--signaling opportunity

Week 4 Results (November 15)

- Visibility: 17.8%

- Citation Rank: 2nd position

- Average Position: 2.5-2.7

- Gap to #1: Just one citation behind Userguiding

Final Results (End of Campaign)

- Visibility: 16%

- Citation Rank: #1 most cited domain (trailing 7 days)

- Average Citation Rank: 3.3
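To make "most cited domain (trailing 7 days)" concrete: assuming the monitoring tool exposes each citation as a timestamp plus the cited URL, a trailing-window ranking is a count per domain over the last seven days. `top_cited_domains` below is a hypothetical helper, not a Promptwatch API, and the sample domains are placeholders.

```python
from collections import Counter
from datetime import datetime, timedelta
from urllib.parse import urlparse


def top_cited_domains(citations, now, days=7):
    """Rank domains by citation count within the trailing `days` window.

    `citations` is an iterable of (timestamp, cited_url) pairs.
    Returns a list of (domain, count), most cited first.
    """
    cutoff = now - timedelta(days=days)
    counts = Counter()
    for timestamp, url in citations:
        if timestamp < cutoff:
            continue  # outside the trailing window
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[len("www."):]
        counts[host] += 1
    return counts.most_common()


now = datetime(2024, 11, 20)
sample = [
    (now - timedelta(days=1), "https://www.example-a.com/blog/post"),
    (now - timedelta(days=2), "https://example-a.com/guide"),
    (now - timedelta(days=3), "https://www.reddit.com/r/SaaS/comments/abc/"),
    (now - timedelta(days=30), "https://example-b.com/old"),  # too old, ignored
]
print(top_cited_domains(sample, now))  # [('example-a.com', 2), ('reddit.com', 1)]
```

A short window like this reacts quickly to new content, which also means a #1 spot over seven days can shift from week to week.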

Key Metrics: Before and After

| Metric | Baseline (Oct 17) | Final | Change |
|---|---|---|---|
| Overall Visibility | 5% | 16% | +222% |
| Citation Rank | 12.4 (8th position) | 3.3 (#1 position) | 8 → 1 |
| Average Position | 7.5 | 2.5 | +67% improvement |
| Competitive Standing | Behind Appcues, Chameleon, Userpilot, Userflow, Userguiding, others | #1 most cited | -- |

Key Learnings

1. Volume Correlates with Visibility

In our campaign, publishing volume tracked with visibility gains: Week 4's jump (4 articles instead of 3) was the largest single-week improvement.

2. Consistency Compounds

Daily engagement with the workflow--running agents, reviewing content, publishing regularly--created compounding effects. LLMs reward consistent, fresh content.

3. Reddit is Underrated for AEO

LLMs heavily cite Reddit discussions. After we noticed Reddit was the #4 most-cited source, doubling down on Reddit helped push us from #3 to #1.

4. Unique Angles Matter

Generic content that rehashes competitor talking points won't rank. Identifying gaps in competitor coverage and adding original insights is essential.

5. Structure for LLMs

Formatting matters. Answer-first paragraphs, clear headings, FAQ sections, and bullet points make content easier for LLMs to parse and cite.

6. Human-in-the-Loop is Non-Negotiable

AI-generated content requires human oversight for accuracy, authenticity, and brand alignment. This isn't optional--it's what separates content that ranks from content that doesn't.

Summary

| Phase | Action | Outcome |
|---|---|---|
| 1. Baseline | Evaluated 5 AEO tools, selected Promptwatch | Reliable data + actionable insights |
| 2. Strategy | 10 queries, 80/20 commercial/informational split | Focused, high-impact targeting |
| 3. Execution | 3-4 articles/week with human oversight | Consistent content pipeline |
| 4. Amplification | Reddit commenting + native posts | Accelerated LLM citations |
| 5. Results | 5.7% → 18.5% visibility in 3 months | 222% increase, #8 → #1 ranking |

Want Similar Results?

We took Product Fruits from 5% visibility to 16%--a 222% increase--and from 8th position to #1 in competitive citations, all within three months.

If you're interested in exploring how we can increase your AEO visibility on LLMs, book a consultation call with us and we'll help you build your own AEO playbook.

For a deeper dive into the specific AI workflows and agent setups used in this case study, stay tuned for our follow-up post on operationalizing AEO content production.

The MarketCurve Newsletter

Essays on brand building, GEO, and winning in the AI era.

Written for founders and AI-native teams. No fluff: just the ideas that actually move the needle.

Want writing like this for your brand? MarketCurve works with a small number of fast-growing AI-native companies each quarter.
